modalities.fmri.glm

Module: modalities.fmri.glm

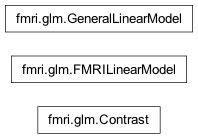

Inheritance diagram for nipy.modalities.fmri.glm:

This module provides an interface to the GLM implemented in nipy.algorithms.statistics.models.regression.

It contains the GLM and contrast classes that are meant to be the main objects of fMRI data analyses.

Note that the GLM is a single-session General Linear Model. Inference across multiple sessions can nevertheless be performed by computing fixed effects on contrasts.

Examples

>>> import numpy as np
>>> from nipy.modalities.fmri.glm import GeneralLinearModel
>>> n, p, q = 100, 80, 10
>>> X, Y = np.random.randn(p, q), np.random.randn(p, n)  # design (p, q), data (p, n)
>>> cval = np.hstack((1, np.zeros(9)))
>>> model = GeneralLinearModel(X)
>>> model.fit(Y)
>>> z_vals = model.contrast(cval).z_score() # z-transformed statistics

Example of fixed-effects statistics across two contrasts:

>>> cval_ = cval.copy()
>>> np.random.shuffle(cval_)
>>> z_ffx = (model.contrast(cval) + model.contrast(cval_)).z_score()

Classes

Contrast

- class nipy.modalities.fmri.glm.Contrast(effect, variance, dof=10000000000.0, contrast_type='t', tiny=1e-50, dofmax=10000000000.0)

Bases: object

The contrast class handles the estimation of statistical contrasts on a given model: Student (t), Fisher (F) and conjunction (tmin-conjunction). Its key feature is that contrasts support addition, which makes fixed-effects models possible.

The current implementation is meant to be simple and could be improved computationally in the future (high-dimensional F contrasts may exhaust memory).
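Addition is what makes fixed-effects pooling work: summing the estimates of independent sessions sums their variances. The sketch below illustrates that arithmetic for a one-dimensional (t) contrast with plain NumPy arrays. `fixed_effects_t` is a hypothetical helper, not part of nipy, and it assumes independent sessions.

```python
import numpy as np


def fixed_effects_t(effects, variances):
    """Fixed-effects t statistic for a summed one-dimensional contrast.

    effects, variances: array-likes of shape (n_sessions, n_voxels) holding
    each session's contrast estimate and its variance. Sessions are assumed
    independent, so the variance of the summed effect is the summed variance.
    """
    effects = np.asarray(effects, dtype=float)
    variances = np.asarray(variances, dtype=float)
    effect_sum = effects.sum(axis=0)
    variance_sum = variances.sum(axis=0)
    return effect_sum / np.sqrt(variance_sum)


# Two sessions, three voxels (illustrative numbers only)
effects = [[1.0, 0.5, -0.2],
           [1.2, 0.4, 0.1]]
variances = [[0.25, 0.04, 0.01],
             [0.25, 0.05, 0.01]]
t_ffx = fixed_effects_t(effects, variances)
```

When two sessions yield the same effect and variance, the pooled t is sqrt(2) times the single-session t, which is why pooling sessions increases sensitivity.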

Notes

The ‘tmin-conjunction’ test is the valid conjunction test discussed in: Nichols T, Brett M, Andersson J, Wager T, Poline JB. Valid conjunction inference with the minimum statistic. Neuroimage. 2005 Apr 15;25(3):653-60. This test gives the p-value of the z-values under the conjunction null, i.e. the union of the null hypotheses for all terms.
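The minimum-statistic idea can be sketched in a few lines: take the smallest statistic across contrasts at each voxel, then evaluate it against the null distribution of a single statistic. This is an illustration, not nipy's code; the helper names are hypothetical and a standard normal null (the large-dof limit) is assumed instead of an exact t distribution.

```python
import numpy as np
from statistics import NormalDist


def tmin_conjunction(stats):
    """Minimum statistic across contrasts, per voxel.

    stats: array-like of shape (n_contrasts, n_voxels).
    """
    return np.asarray(stats, dtype=float).min(axis=0)


def conjunction_p_values(stats):
    """Upper-tail p-value of the minimum statistic, per voxel.

    Under the conjunction null, the minimum statistic is compared with the
    null distribution of a single statistic. A standard normal null is
    assumed here (the large-dof limit), not an exact t distribution.
    """
    std_normal = NormalDist()
    return np.array([1.0 - std_normal.cdf(s) for s in tmin_conjunction(stats)])


stats = [[3.5, 4.0, -1.0],
         [2.0, 5.0, 3.0]]
t_min = tmin_conjunction(stats)        # per-voxel minima: 2.0, 4.0, -1.0
p_conj = conjunction_p_values(stats)
```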

- __init__(effect, variance, dof=10000000000.0, contrast_type='t', tiny=1e-50, dofmax=10000000000.0)

- Parameters:

- effect: array of shape (contrast_dim, n_voxels)

the effects related to the contrast

- variance: array of shape (contrast_dim, contrast_dim, n_voxels)

the associated variance estimate

- dof: scalar, the degrees of freedom

- contrast_type: string, one of ‘t’, ‘F’ or ‘tmin-conjunction’

- p_value(baseline=0.0)

Return a parametric estimate of the p-value associated with the null hypothesis: (H0) ‘contrast equals baseline’

- Parameters:

- baseline: float, optional

Baseline value for the test statistic

Notes

A value of 0.5 is returned where the statistic is not defined.

- stat(baseline=0.0)
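With the default dof of 1e10, a t statistic is effectively standard normal. The sketch below computes p-values under that large-dof assumption for a t contrast and applies the 0.5 convention for undefined statistics. `upper_tail_p` is a hypothetical helper, and treating the test as one-sided (upper tail) is an assumption of the sketch.

```python
import math
import numpy as np


def upper_tail_p(stat):
    """One-sided p-values P(Z > stat) under a standard normal null.

    Entries where the statistic is undefined (NaN) get the value 0.5.
    """
    stat = np.asarray(stat, dtype=float)
    p = np.full(stat.shape, 0.5)
    defined = ~np.isnan(stat)
    # Standard normal survival function: 0.5 * erfc(x / sqrt(2))
    p[defined] = [0.5 * math.erfc(x / math.sqrt(2.0)) for x in stat[defined]]
    return p


p = upper_tail_p([0.0, float("nan"), 1.96])
```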

Return the decision statistic associated with the test of the null hypothesis: (H0) ‘contrast equals baseline’

- Parameters:

- baseline: float, optional

Baseline value for the test statistic
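For reference, the textbook Student t and Fisher F statistics can be computed from arrays with the shapes documented for __init__ (effect of shape (contrast_dim, n_voxels), variance of shape (contrast_dim, contrast_dim, n_voxels)). This is a sketch, not nipy's implementation; the helper names are hypothetical, and flooring the variance at `tiny` is assumed to mirror the constructor's `tiny` parameter.

```python
import numpy as np


def t_stat(effect, variance, baseline=0.0, tiny=1e-50):
    """Student t per voxel for a one-dimensional contrast.

    effect: (1, n_voxels); variance: (1, 1, n_voxels).
    The variance is floored at `tiny` to avoid division by zero.
    """
    e = np.asarray(effect, dtype=float)[0]
    v = np.asarray(variance, dtype=float)[0, 0]
    return (e - baseline) / np.sqrt(np.maximum(v, tiny))


def f_stat(effect, variance, baseline=0.0):
    """Fisher F per voxel: e^T V^{-1} e / q for a q-dimensional contrast.

    effect: (q, n_voxels); variance: (q, q, n_voxels).
    """
    e = np.asarray(effect, dtype=float) - baseline
    v = np.asarray(variance, dtype=float)
    q, n_voxels = e.shape
    f = np.empty(n_voxels)
    for i in range(n_voxels):
        # Squared Mahalanobis norm of the effect, scaled by the contrast dimension
        f[i] = e[:, i] @ np.linalg.solve(v[:, :, i], e[:, i]) / q
    return f


t = t_stat([[2.0, -1.0]], [[[4.0, 0.25]]])   # 1.0, -2.0
f = f_stat([[2.0, -1.0]], [[[4.0, 0.25]]])   # 1.0, 4.0 (equal to t ** 2)
```

For a one-dimensional contrast the F statistic reduces to the squared t statistic, which is a quick sanity check on the two formulas.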

- z_score(baseline=0.0)

Return a parametric estimate of the z-score associated with the null hypothesis: (H0) ‘contrast equals baseline’

- Parameters:

- baseline: float, optional

Baseline value for the test statistic

Notes

A value of 0 is returned where the statistic is not defined.

FMRILinearModel

- class nipy.modalities.fmri.glm.FMRILinearModel(fmri_data, design_matrices, mask='compute', m=0.2, M=0.9, threshold=0.5)
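A z-score is the standard-normal quantile with the same upper-tail probability as the test statistic, so p = 0.5 corresponds to z = 0. The sketch below converts one-sided p-values to z-scores and maps undefined entries to 0. `z_from_p` is a hypothetical helper; computing z from p, rather than directly from the statistic, is an assumption of the sketch.

```python
import numpy as np
from statistics import NormalDist


def z_from_p(p_values):
    """Convert one-sided p-values to z-scores so that P(Z > z) = p.

    NaN entries (undefined statistics) map to 0, matching p = 0.5.
    """
    p = np.asarray(p_values, dtype=float)
    z = np.zeros(p.shape)
    std_normal = NormalDist()
    # inv_cdf needs 0 < p < 1, so clip away the exact endpoints
    lo, hi = np.finfo(float).tiny, 1.0 - np.finfo(float).eps
    for idx in np.ndindex(p.shape):
        if not np.isnan(p[idx]):
            # -Phi^{-1}(p) is the upper-tail quantile; accurate for small p
            z[idx] = -std_normal.inv_cdf(float(np.clip(p[idx], lo, hi)))
    return z


z = z_from_p([0.5, float("nan"), 0.001])   # approximately 0.0, 0.0, 3.09
```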

Bases: object

This class handles GLMs from a higher-level perspective, i.e. it takes images as input and returns images as output.

- __init__(fmri_data, design_matrices, mask='compute', m=0.2, M=0.9, threshold=0.5)

Load the data

- Parameters:

- fmri_data: Image or str or sequence of Images / str

fMRI images, or paths to the (4D) fMRI images

- design_matrices: arrays or str or sequence of arrays / str

design matrix arrays, or paths to .npz files

- mask: str or Image or None, optional

A string may be ‘compute’ or a path to an image; an image is used as an input (assumed binary) mask image(s). If ‘compute’, the mask is computed; if None, no masking is applied.

- m, M, threshold: float, optional

parameters of the masking procedure. Should be within [0, 1]

Notes

The only computation done here is mask computation (if required)

Examples

We need the example data package for this example:

    import numpy as np
    from nipy.utils import example_data
    from nipy.modalities.fmri.glm import FMRILinearModel

    # Two runs from the 'fiac0' example data, with one design file per run
    fmri_files = [example_data.get_filename('fiac', 'fiac0', run)
                  for run in ['run1.nii.gz', 'run2.nii.gz']]
    design_files = [example_data.get_filename('fiac', 'fiac0', run)
                    for run in ['run1_design.npz', 'run2_design.npz']]
    mask = example_data.get_filename('fiac', 'fiac0', 'mask.nii.gz')
    multi_session_model = FMRILinearModel(fmri_files, design_files, mask)
    multi_session_model.fit()
    # Same contrast (second design column) for both runs, pooled as fixed effects
    z_image, = multi_session_model.contrast([np.eye(13)[1]] * 2)
    # Number of voxels with p < 0.001 (one-sided z > 3.09)
    print(np.sum(z_image.get_fdata() > 3.09))
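The 3.09 cut-off used in this example is the one-sided standard-normal quantile for p = 0.001. It can be checked with the Python standard library alone:

```python
from statistics import NormalDist

# One-sided cut-off: P(Z > z) = 0.001 for a standard normal Z
z_threshold = NormalDist().inv_cdf(1 - 0.001)
print(round(z_threshold, 2))  # 3.09
```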

- contrast(contrasts, con_id='', contrast_type=None, output_z=True, output_stat=False, output_effects=False, output_variance=False)

Estimate a contrast as fixed effects across all sessions

- Parameters:

- contrasts: array or list of arrays of shape (n_col) or (n_dim, n_col)

numerical definition of the contrast (one array per run), where n_col is the number of columns of the design matrix

- con_id: str, optional

name of the contrast

- contrast_type: {‘t’, ‘F’, ‘tmin-conjunction’}, optional

type of the contrast

- output_z: bool, optional

Whether to return the corresponding z-stat image

- output_stat: bool, optional

Whether to return the base (t/F) stat image

- output_effects: bool, optional

Whether to return the corresponding effect image

- output_variance: bool, optional

Whether to return the corresponding variance image

- Returns:

- output_images: list of nibabel images

The required output images, in the following order: z image, stat(t/F) image, effects image, variance image

- fit(do_scaling=True, model='ar1', steps=100)

Load, mask and scale the data, then fit the GLM

- Parameters:

- do_scaling: bool, optional

if True, the data are scaled to percent of the voxel mean

- model: string, optional

the kind of GLM (‘ols’ or ‘ar1’) to fit to the data

- steps: int, optional

in the case of ‘ar1’, the discretization (number of steps) of the AR(1) parameter

GeneralLinearModel

- class nipy.modalities.fmri.glm.GeneralLinearModel(X)

Bases: object

This class handles the so-called General Linear Model.

Most of the work is done in the fit() and contrast() methods: fit() performs the standard two-step (‘ols’ then ‘ar1’) GLM fitting, and contrast() returns a Contrast instance, yielding statistics and p-values. The link between fit() and contrast() is made via two class members:

- glm_results: dictionary of nipy.algorithms.statistics.models.regression.RegressionResults instances

describing the results of a GLM fit

- labels: array of shape (n_voxels,)

labels that associate each voxel with a results key
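The link between a label-keyed results dictionary and per-voxel outputs can be sketched as a scatter: every voxel whose label equals a key receives that key's values. In this sketch `results_by_label` stands in for glm_results, with plain arrays in place of RegressionResults instances; `assemble_per_voxel` is a hypothetical helper, not nipy's code.

```python
import numpy as np


def assemble_per_voxel(labels, results_by_label):
    """Scatter per-group values back into a per-voxel array.

    labels: array of shape (n_voxels,) giving each voxel's results key.
    results_by_label: dict mapping key -> array of values for the voxels
    carrying that key, in voxel order.
    """
    labels = np.asarray(labels)
    out = np.empty(labels.shape, dtype=float)
    for key, values in results_by_label.items():
        out[labels == key] = values
    return out


labels = np.array([0.1, 0.0, 0.1, 0.0])
results = {0.0: np.array([10.0, 20.0]), 0.1: np.array([1.0, 2.0])}
per_voxel = assemble_per_voxel(labels, results)   # 1.0, 10.0, 2.0, 20.0
```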

- __init__(X)

- Parameters:

- X: array of shape (n_time_points, n_regressors)

the design matrix

- contrast(con_val, contrast_type=None)

Specify and estimate a linear contrast

- Parameters:

- con_val: numpy.ndarray of shape (p) or (q, p)

where q = number of contrast vectors and p = number of regressors

- contrast_type: {None, ‘t’, ‘F’ or ‘tmin-conjunction’}, optional

type of the contrast. If None, then defaults to ‘t’ for 1D con_val and ‘F’ for 2D con_val

- Returns:

- con: Contrast instance
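For a plain OLS fit, the quantities behind a t contrast follow the textbook formulas effect = c^T beta and Var(effect) = sigma^2 c^T (X^T X)^{-1} c. The sketch below illustrates them with NumPy only; `ols_t_contrast` is a hypothetical helper that ignores the AR(1) step and is not nipy's implementation.

```python
import numpy as np


def ols_t_contrast(X, Y, c):
    """Textbook OLS estimate of a one-dimensional contrast, per voxel.

    X: design matrix (n_time_points, n_regressors)
    Y: data (n_time_points, n_voxels)
    c: contrast vector (n_regressors,)
    Returns the effect c^T beta, its variance sigma^2 c^T (X^T X)^+ c,
    and the t statistic.
    """
    n = X.shape[0]
    beta, _, rank, _ = np.linalg.lstsq(X, Y, rcond=None)   # (n_regressors, n_voxels)
    resid = Y - X @ beta
    sigma2 = (resid ** 2).sum(axis=0) / (n - rank)         # residual variance per voxel
    effect = c @ beta                                       # (n_voxels,)
    variance = (c @ np.linalg.pinv(X.T @ X) @ c) * sigma2   # (n_voxels,)
    return effect, variance, effect / np.sqrt(variance)


rng = np.random.default_rng(0)
X = np.column_stack([np.ones(50), rng.standard_normal(50)])   # intercept + one regressor
true_beta = np.array([[1.0, 1.0],     # intercepts for two voxels
                      [2.0, 0.0]])    # slopes: voxel 0 responds, voxel 1 does not
Y = X @ true_beta + 0.1 * rng.standard_normal((50, 2))
effect, variance, t = ols_t_contrast(X, Y, np.array([0.0, 1.0]))
```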

- fit(Y, model='ols', steps=100)

GLM fitting of a dataset using ‘ols’ regression or the two-pass (‘ols’ then ‘ar1’) procedure

- Parameters:

- Y: array of shape (n_time_points, n_samples)

the fMRI data

- model: {‘ar1’, ‘ols’}, optional

the temporal variance model. Defaults to ‘ols’

- steps: int, optional

Maximum number of discrete steps for the AR(1) coef histogram
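One way to picture the role of `steps` is to estimate an AR(1) coefficient per voxel from the residuals of a first OLS pass and snap it onto a grid, so that voxels sharing a value can be refit together with one AR(1) model. The sketch below assumes the lag-1 autocorrelation of the residuals as the estimator and truncation toward zero onto multiples of 1 / steps; both choices are assumptions, and `discretized_ar1` is a hypothetical helper.

```python
import numpy as np


def discretized_ar1(resid, steps=100):
    """Per-voxel lag-1 autocorrelation of residuals, snapped to a grid.

    resid: residuals of shape (n_time_points, n_voxels).
    The estimate is truncated toward zero onto multiples of 1 / steps, so
    voxels sharing a value can be refit together with one AR(1) model.
    """
    resid = np.asarray(resid, dtype=float)
    ar1 = (resid[1:] * resid[:-1]).sum(axis=0) / (resid ** 2).sum(axis=0)
    return np.trunc(ar1 * steps) / steps


# Simulated AR(1) noise with coefficient 0.5, plus a white-noise voxel
rng = np.random.default_rng(1)
noise = rng.standard_normal((5000, 2))
series = noise.copy()
for t in range(1, series.shape[0]):
    series[t, 0] = 0.5 * series[t - 1, 0] + noise[t, 0]
labels = discretized_ar1(series, steps=100)   # roughly 0.5 and 0.0
```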

- get_beta(column_index=None)

Accessor for the best linear unbiased estimates of the model parameters

- Parameters:

- column_index: int or array-like of int or None, optional

The indices of the columns to be returned. If None (default behaviour), all columns are returned

- Returns:

- beta: array of shape (n_voxels, n_columns)

the beta

- get_logL()

Accessor for the log-likelihood of the model

- Returns:

- logL: array of shape (n_voxels,)

the log-likelihood per voxel

- get_mse()

Accessor for the mean squared error of the model

- Returns:

- mse: array of shape (n_voxels,)

the mean squared error per voxel
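Both accessors report standard regression summaries. The sketch below uses the textbook definitions as an assumption: the MSE as the sum of squared errors over the residual degrees of freedom, and the Gaussian log-likelihood evaluated at the maximum-likelihood variance. nipy's exact normalization may differ, and `mse_and_loglik` is a hypothetical helper.

```python
import numpy as np


def mse_and_loglik(resid, rank):
    """Per-voxel MSE and Gaussian log-likelihood from regression residuals.

    resid: (n_time_points, n_voxels); rank: rank of the design matrix.
    """
    resid = np.asarray(resid, dtype=float)
    n = resid.shape[0]
    sse = (resid ** 2).sum(axis=0)             # sum of squared errors
    mse = sse / (n - rank)                     # SSE over residual degrees of freedom
    # Gaussian log-likelihood evaluated at the ML variance estimate sse / n
    loglik = -0.5 * n * (np.log(2 * np.pi * sse / n) + 1.0)
    return mse, loglik


mse, loglik = mse_and_loglik([[1.0], [-1.0], [1.0], [-1.0]], rank=2)
# mse is 2.0; loglik is -2 * (log(2 * pi) + 1)
```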

Function

- nipy.modalities.fmri.glm.data_scaling(Y)

Scale the data column-wise to percent change from baseline

- Parameters:

- Y: array of shape (n_time_points, n_voxels)

the input data

- Returns:

- Y: array of shape (n_time_points, n_voxels)

the data after mean-scaling, de-meaning and multiplication by 100

- mean: array of shape (n_voxels,)

the data mean
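The documented steps (mean-scaling, de-meaning, multiplication by 100) amount to expressing each column as percent change from its own mean. A minimal sketch of that arithmetic follows; `percent_signal_change` is a hypothetical name, not nipy's function, and it assumes every column has a nonzero mean.

```python
import numpy as np


def percent_signal_change(Y):
    """Scale each column to percent change from its own mean.

    Y: (n_time_points, n_voxels) with nonzero column means.
    Returns the scaled data and the column means.
    """
    Y = np.asarray(Y, dtype=float)
    mean = Y.mean(axis=0)
    # Divide by the mean, subtract 1 to de-mean, then express in percent
    return 100.0 * (Y / mean - 1.0), mean


scaled, mean = percent_signal_change([[90.0, 10.0], [110.0, 30.0]])
# mean is (100, 20); scaled is ((-10, -50), (10, 50))
```

Each scaled column has zero mean, so values read directly as percent deviation from the voxel's baseline.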